Tokenization & Lexical Analysis

Description:

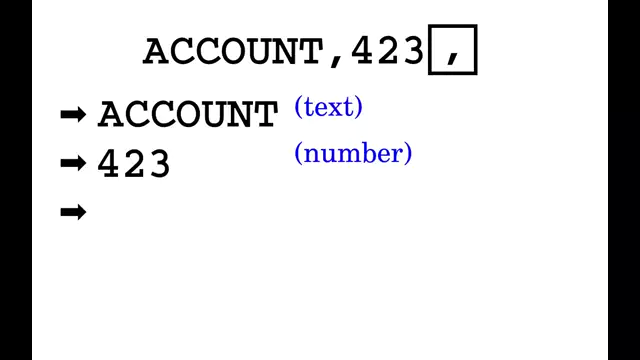

Tokenization (lexical analysis) loads, or deserializes, structured input data into computer memory as an implicit chain of tokens. This prepares the data for subsequent processing: syntactic and semantic analysis, conversion, parsing, translation, or execution.
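As a rough illustration of what an "implicit chain of tokens" can look like in practice, here is a minimal sketch of a regex-based tokenizer in Python. The token classes (`NUMBER`, `IDENT`, `OP`), the `Token` type, and the `tokenize` function are hypothetical names chosen for this example and describe a tiny arithmetic-style input, not any particular language. The generator yields tokens one at a time, so the chain is never materialized unless the consumer asks for it.

```python
import re
from typing import Iterator, NamedTuple


class Token(NamedTuple):
    kind: str   # token class, e.g. "NUMBER"
    text: str   # the matched characters (the lexeme)
    pos: int    # character offset in the input


# Hypothetical token classes for a tiny arithmetic-style language.
# Order matters: earlier alternatives win when several could match.
TOKEN_SPEC = [
    ("NUMBER", r"\d+(?:\.\d+)?"),   # integer or decimal literal
    ("IDENT", r"[A-Za-z_]\w*"),     # identifier
    ("OP", r"[+\-*/=()]"),          # single-character operators and parentheses
    ("SKIP", r"\s+"),               # whitespace, discarded
    ("MISMATCH", r"."),             # any other character is an error
]
MASTER_RE = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC)
)


def tokenize(source: str) -> Iterator[Token]:
    """Lazily yield tokens from source, skipping whitespace."""
    for match in MASTER_RE.finditer(source):
        kind = match.lastgroup  # name of the alternative that matched
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise SyntaxError(
                f"unexpected character {match.group()!r} at offset {match.start()}"
            )
        yield Token(kind, match.group(), match.start())


if __name__ == "__main__":
    for token in tokenize("x = 3.14 * (y + 2)"):
        print(token)
```

A later stage such as a parser would typically consume this stream token by token. Combining the patterns into one alternation with named groups is only one possible design; a hand-written character loop or a generated lexer would serve the same purpose.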